The inference engine.

For multimodal AI.

The most bleeding-edge engine for optimized multimodal models. Purpose-built for image, video, audio, and vision — the workloads defining the next decade of AI.

In partnership with

Google's flagship T2I — fastest path to photoreal

BFL's production T2I baseline. Broad style range.

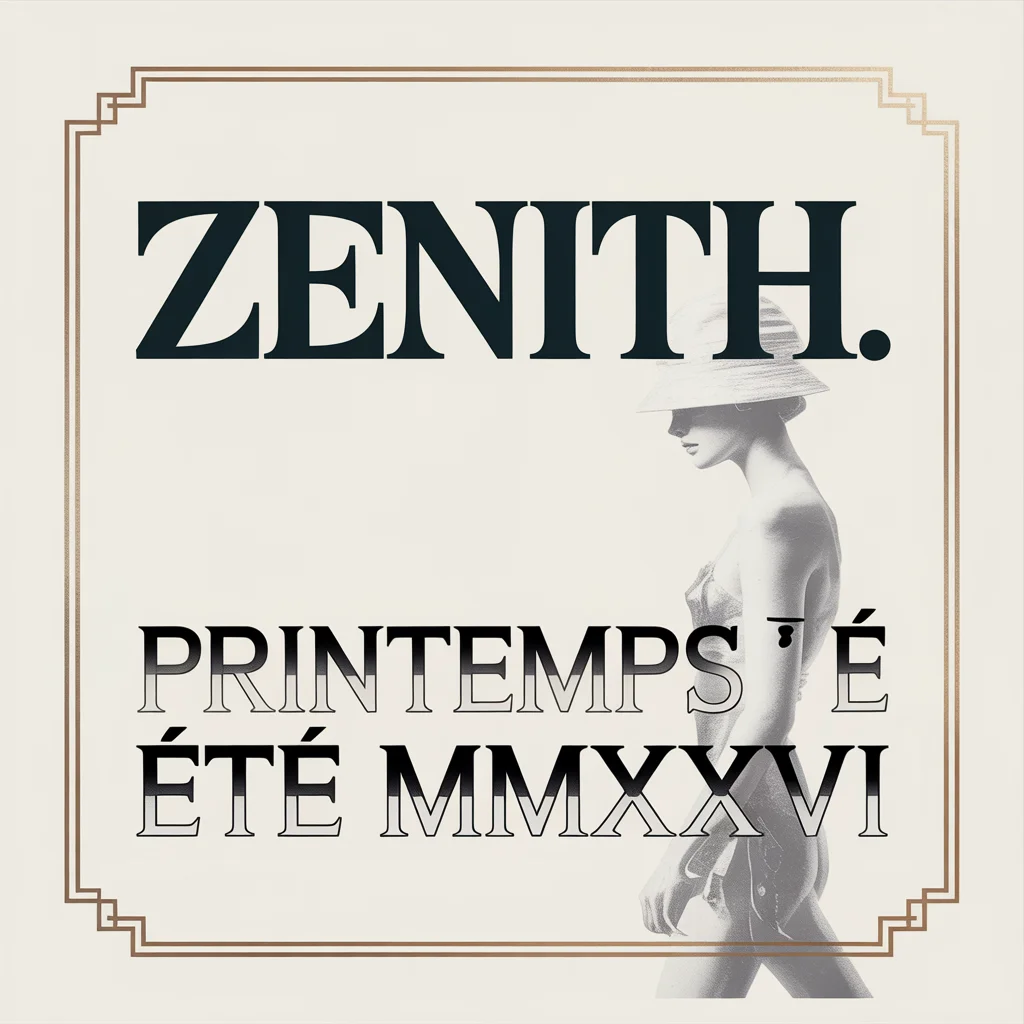

Best-in-class typography for posters & ads.

ByteDance's multi-reference image model.

Cinematic stylized video, 1080p, native 4K.

Director-grade video with native synced audio.

Segment anything in image and video. Open weights.

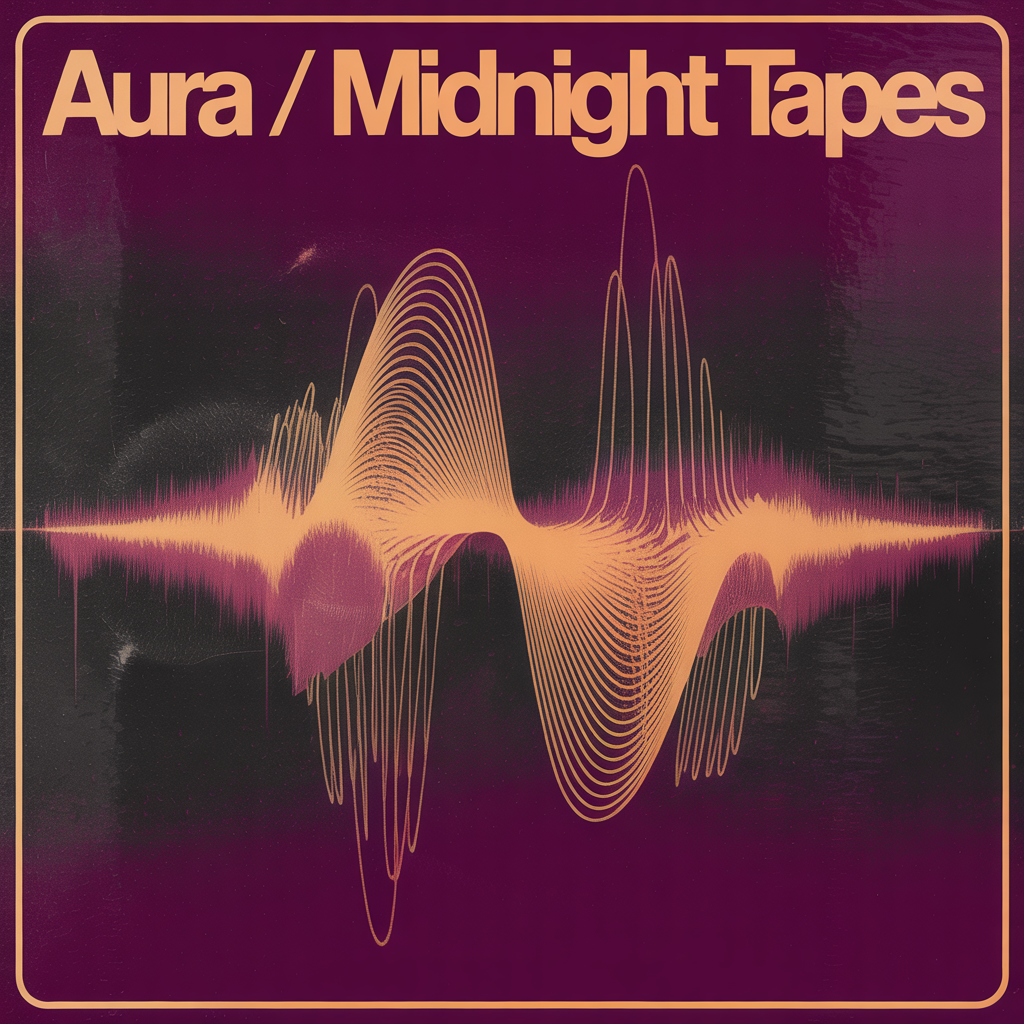

TTS with 70+ languages and inline emotion tags.

One engine, every modality. Image, video, audio, vision — optimized for speed, quality, and cost. Same outputs as the source API, at lower latency and lower spend.

Frontier T2I from Nano Banana 2, GPT Image, FLUX, Imagen, Seedream — same weights, same outputs, sub-2-second latency on most.

Image models →

Cinematic 5–10s clips with native audio. Kling 3.0 Pro, Veo 3.1, Seedance 2.0, Hailuo, Wan 2.2 — all on one queue, one bill.

Video models →

ElevenLabs v3 TTS with 70+ languages and inline emotion tags. Streaming output for IVR, podcasts, and real-time agents.

Audio models →

SAM 3.1 for object segmentation and tracking. Frontier VLMs for document, video, and multimodal understanding at production speed.

Vision models →

Instruction-driven image editing with FLUX.1 Kontext — restore, restyle, swap. Identity preservation across multi-step edits.

Editing models →

Bring your own LoRA or fine-tune; serve open-weights models at frontier-grade throughput. Wan, FLUX dev, Qwen Image — same SDK shape.

Open-weights →

We work hand-in-hand with NVIDIA on inference. That gets us early access to their newest accelerators and CUDA stack — and gets our researchers and engineers in the room with theirs to optimize Infer for whatever ships next. The work flows both ways.

Bring your weights or fine-tune ours. Reserve capacity on the same hardware Infer runs on — Hopper today, Blackwell as it ships. Optimized stack, dedicated nodes per tenant, uptime measured against production SLAs.

Frontier-grade throughput today. The H200 cluster runs the bulk of our serverless catalog; reserve dedicated nodes for hot LoRAs, regulated workloads, or 24/7 production loads.

Next-generation compute as it lands at the foundry. First capacity is reserved for enterprise customers and frontier-model partners; talk to us before the queue closes for the next wave.

Every model in the catalog is priced at least 20% under its source API. Same weights, same outputs, same SLA — the savings come from how Infer runs the model, not from cutting features.

Type-safe SDKs. OpenAPI spec. Streaming. Webhooks. Everything you need to ship fast — and nothing you don't.

    import infer

    # Initialize with your API key
    client = infer.Client()

    # Generate with any of 60+ models
    result = client.run("flux-schnell", {
        "prompt": "A futuristic city at sunset",
        "width": 1024,
        "height": 1024
    })

    # That's it. No infra, no queues.
    print(result.url)

    import Infer from "@infer/sdk";

    // Initialize with your API key
    const client = new Infer();

    // Generate with any of 60+ models
    const result = await client.run("flux-schnell", {
      prompt: "A futuristic city at sunset",
      width: 1024,
      height: 1024,
    });

    // Type-safe. Streaming-ready.
    console.log(result.url);

    package main

    import (
        "fmt"
        "log"

        infer "github.com/infer/sdk-go"
    )

    func main() {
        client := infer.NewClient()

        // Run any of 60+ models
        result, err := client.Run("flux-schnell", infer.Params{
            Prompt: "A futuristic city at sunset",
            Width:  1024,
            Height: 1024,
        })
        if err != nil {
            // Surface the failure instead of printing an empty result
            log.Fatal(err)
        }
        fmt.Println(result.URL)
    }

    # Works with any HTTP client
    curl https://api.infer.sh/v1/run \
      -H "Authorization: Bearer $INFER_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "flux-schnell",
        "prompt": "A futuristic city at sunset",
        "width": 1024,
        "height": 1024
      }'

    # Response streams back over HTTP/2
    # X-Infer-Latency: 1243ms
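If you would rather skip the SDK, the same request can be sketched with nothing but the Python standard library. The endpoint, headers, JSON fields, and the `INFER_KEY` environment variable all come from the curl example above. The helper names `build_run_request` and `run` are hypothetical, and because this page does not document the response body, the sketch returns it undecoded along with the `X-Infer-Latency` header.

```python
import json
import os
import urllib.request

# Endpoint taken from the curl example above.
API_URL = "https://api.infer.sh/v1/run"


def build_run_request(model, prompt, width=1024, height=1024, api_key=None):
    """Build (but do not send) the POST request shown in the curl example.

    Hypothetical helper: not part of any official SDK.
    """
    # Fall back to the INFER_KEY environment variable used by the curl example.
    key = api_key or os.environ.get("INFER_KEY", "")
    body = json.dumps({
        "model": model,
        "prompt": prompt,
        "width": width,
        "height": height,
    }).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {key}",
            "Content-Type": "application/json",
        },
    )


def run(model, prompt, **kwargs):
    """Send the request and return (latency_header, raw_body_bytes).

    The response format is an unknown here, so the body is returned as-is.
    """
    request = build_run_request(model, prompt, **kwargs)
    with urllib.request.urlopen(request) as response:
        return response.headers.get("X-Infer-Latency"), response.read()
```

Calling `run("flux-schnell", "A futuristic city at sunset")` with `INFER_KEY` set would issue the same call as the curl command. Keeping request construction separate from sending makes the payload easy to inspect or log before any network traffic happens.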

Reserved capacity, private endpoints, compliance packages — available as an add-on for teams operating at serious scale. Not included in Pro; talk to us and we'll scope what you need.

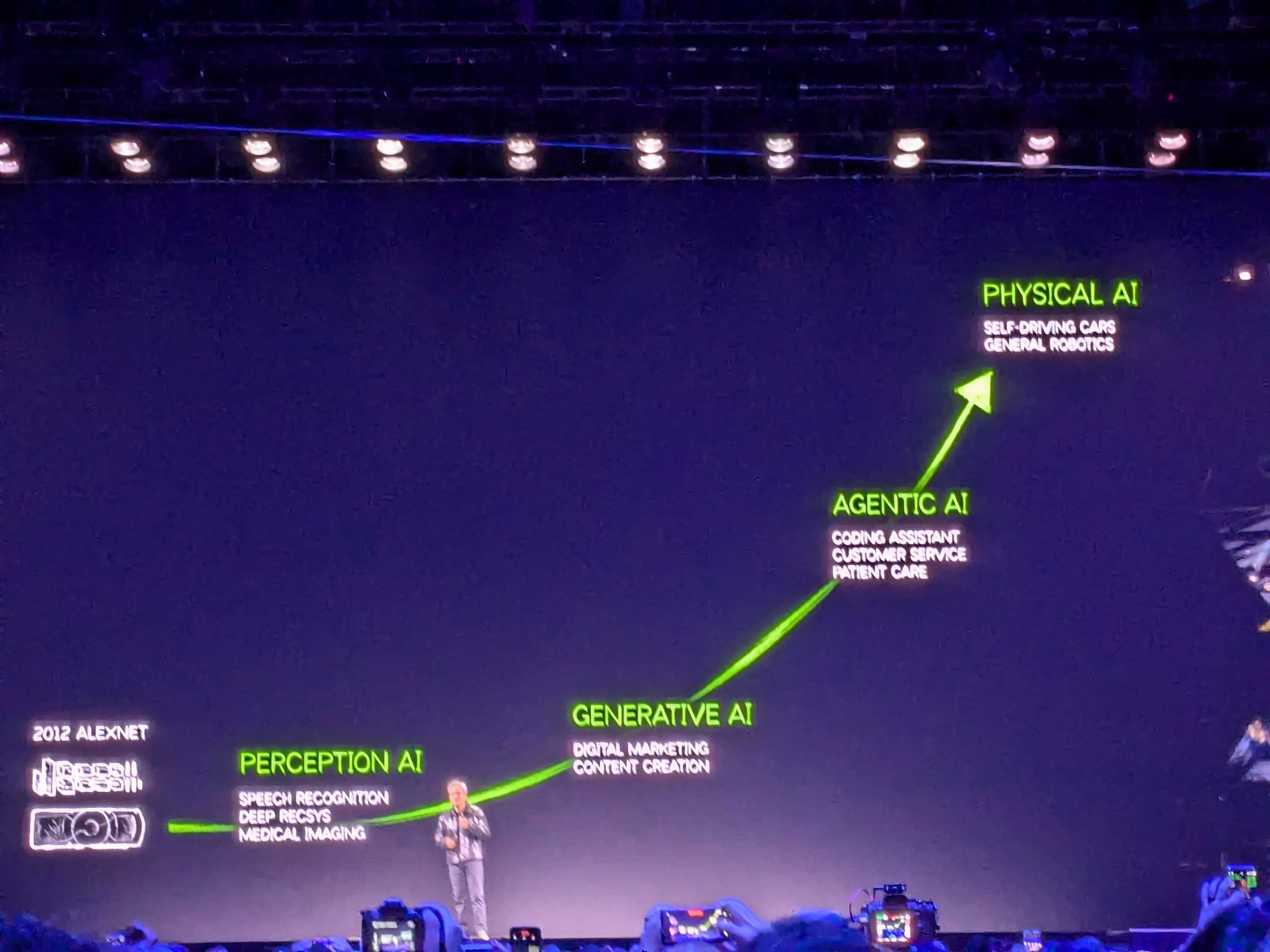

Reka Edge is frontier-level edge intelligence for physical AI — a 7B vision-language model fast and lean enough to run on a drone, a Jetson, a car, or a wrist. Real-time spatial reasoning and object localization without cloud connectivity.

Reka built the model. Infer serves it. The same engine powering every other model in our catalog — one SDK shape, one queue, one bill — with the throughput-per-dollar that makes physical-AI deployment economically viable.

The security-review and procurement questions, handled up front.

When a new frontier model lands, it lands here. We track every leaderboard worth tracking — VBench, the Artificial Analysis arenas, MMMU-Pro, OpenVLM — and serve the top of each the day they ship.

View the leaderboards →