Video editing tools

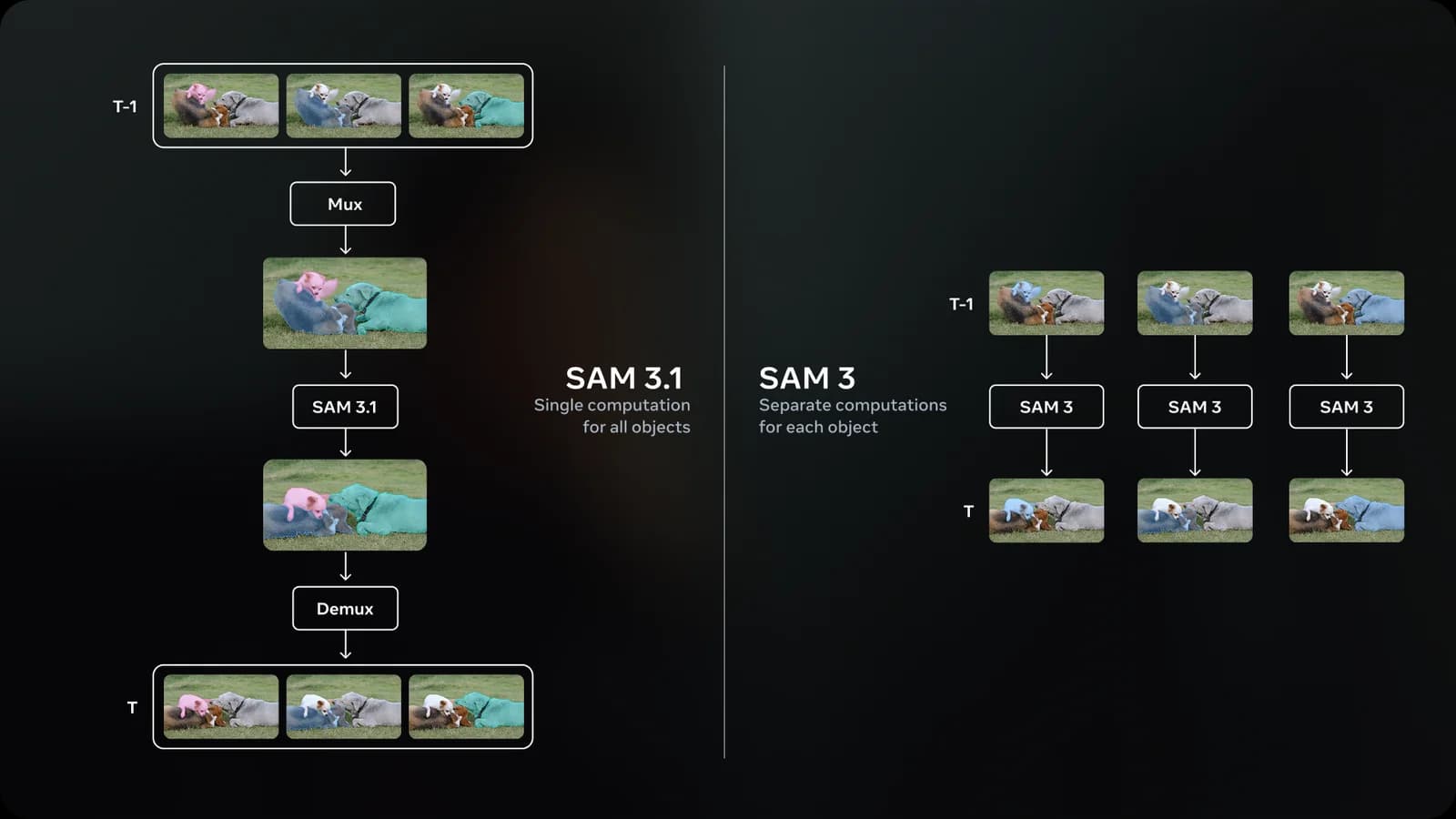

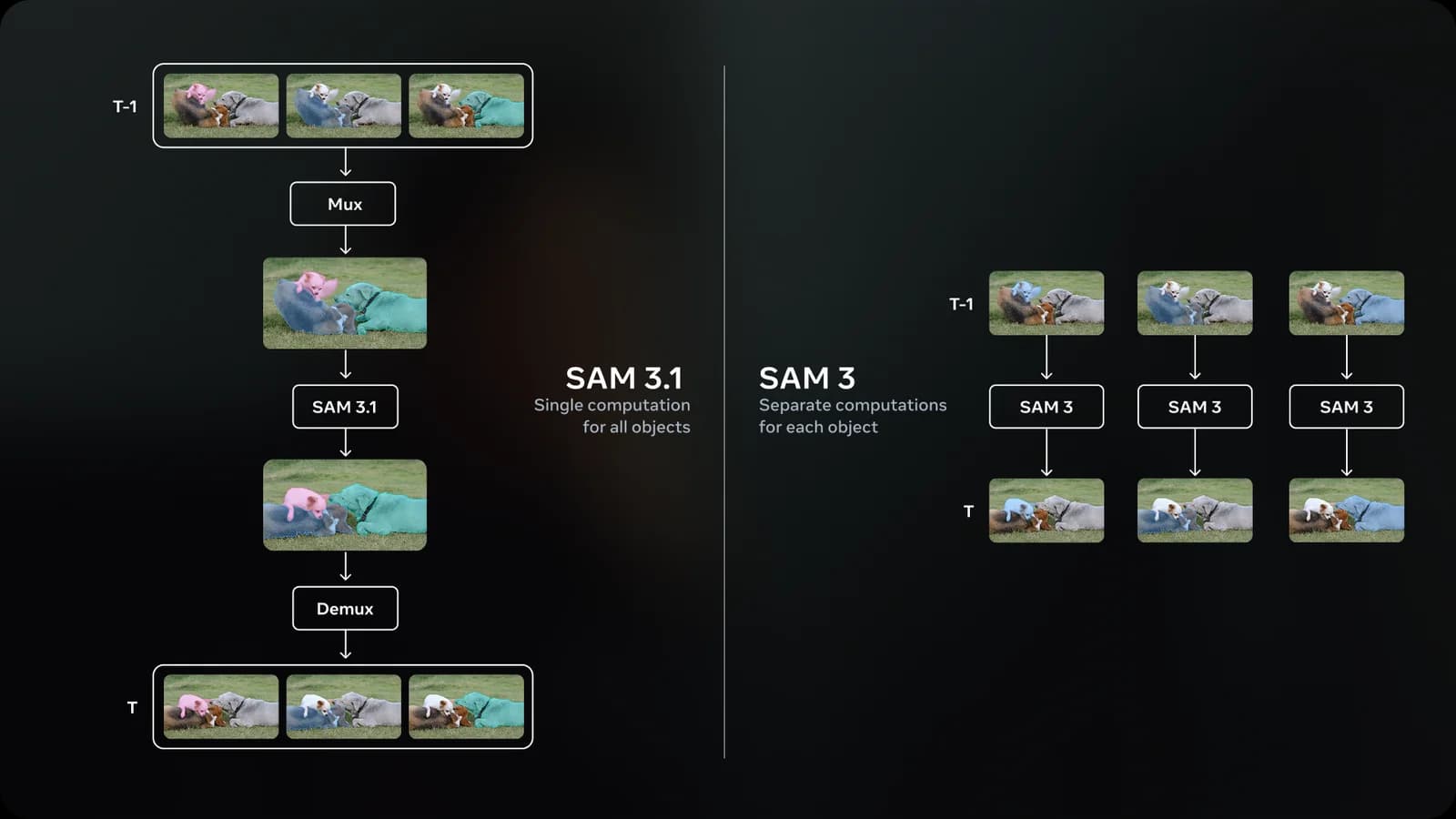

Subject isolation for masking, color grading, and effects work. SAM 3.1's video tracking holds across cuts and occlusion.

EXAMPLE: Click a subject on frame 0, get per-frame masks across the entire clip.
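Once per-frame masks exist, a grade can be limited to the tracked subject. The following is a minimal sketch of that downstream step only, not of the model's API. It assumes each frame is a nested list of RGB tuples and each mask is a matching nested list of booleans; the source does not specify the real output format. The names `grade_subject` and `grade_clip` are hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]
Frame = List[List[Pixel]]   # rows of RGB pixels (assumed layout)
Mask = List[List[bool]]     # True where the tracked subject is (assumed layout)


def _clamp(value: float) -> int:
    """Round to the nearest integer and clamp into the 8-bit range."""
    return max(0, min(255, round(value)))


def grade_subject(frame: Frame, mask: Mask, gain: float) -> Frame:
    """Scale RGB values by `gain` inside the mask; leave other pixels untouched."""
    graded: Frame = []
    for pixel_row, mask_row in zip(frame, mask):
        new_row: List[Pixel] = []
        for (r, g, b), inside in zip(pixel_row, mask_row):
            if inside:
                new_row.append((_clamp(r * gain), _clamp(g * gain), _clamp(b * gain)))
            else:
                new_row.append((r, g, b))
        graded.append(new_row)
    return graded


def grade_clip(frames: List[Frame], masks: List[Mask], gain: float) -> List[Frame]:
    """Apply the same grade to every frame, pairing frame i with mask i."""
    if len(frames) != len(masks):
        raise ValueError("expected exactly one mask per frame")
    return [grade_subject(f, m, gain) for f, m in zip(frames, masks)]
```

In a real pipeline the same idea would usually be expressed with array operations rather than nested loops. The pure-Python form is used here only to keep the sketch dependency-free.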

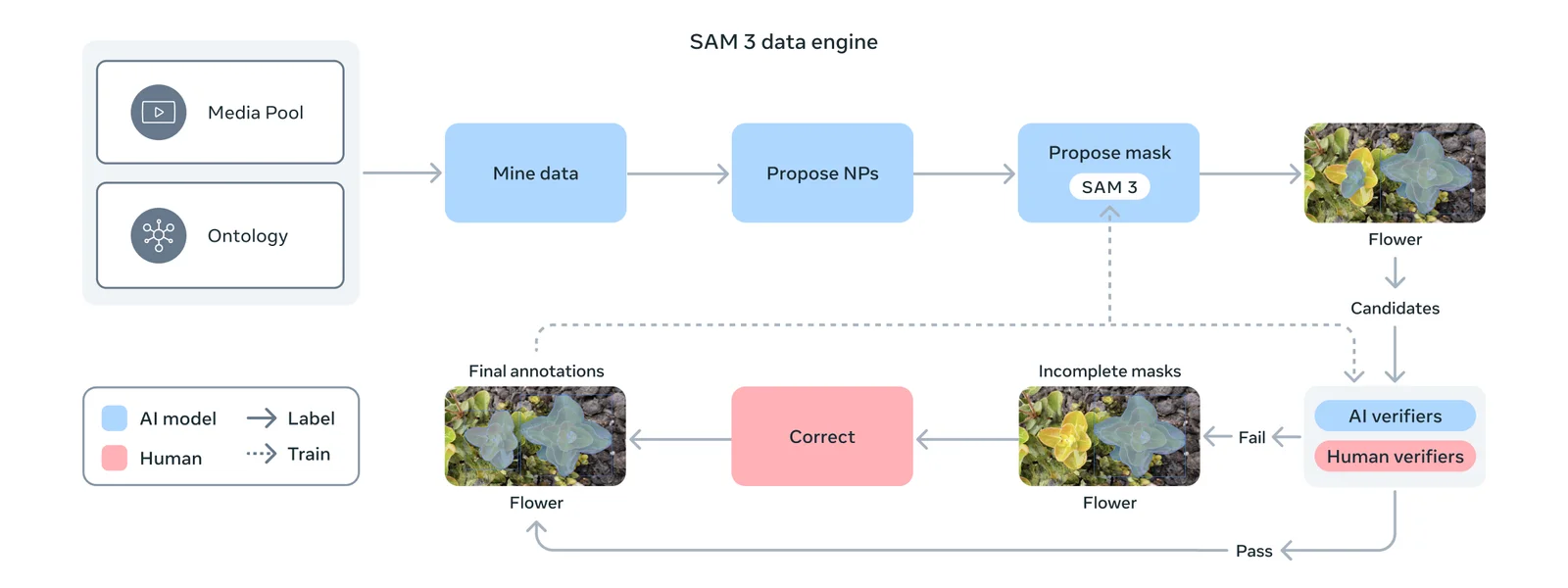

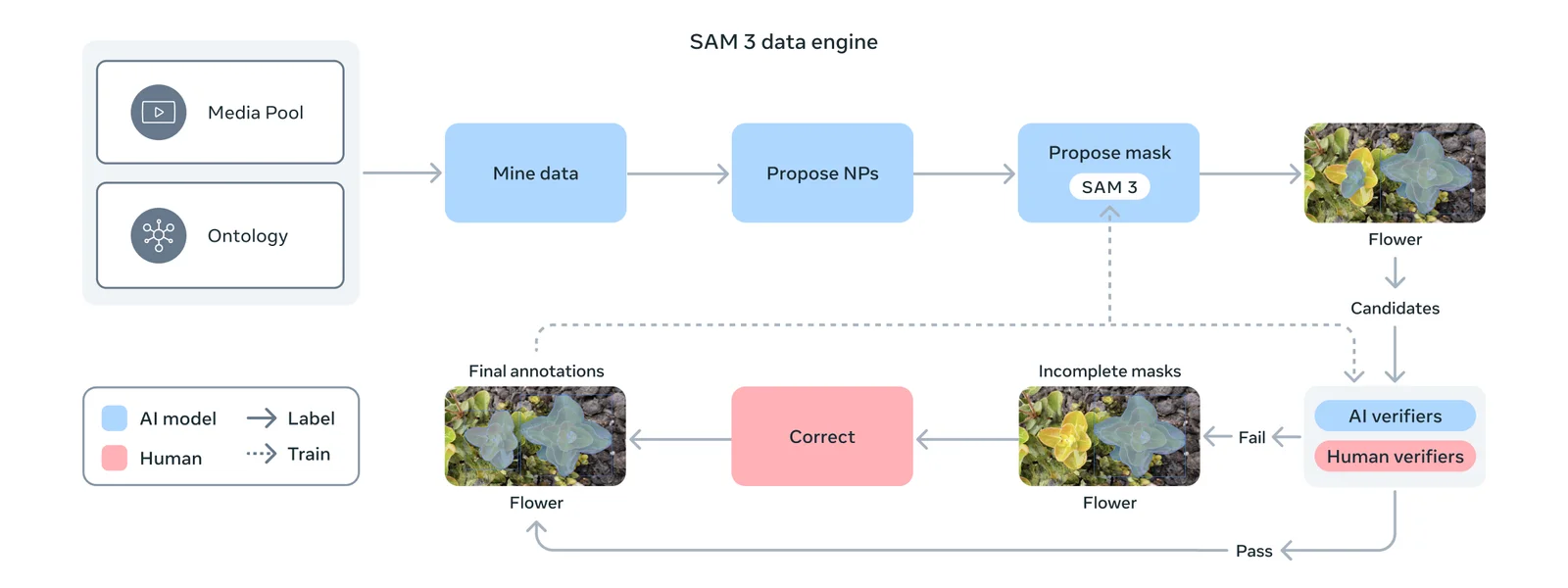

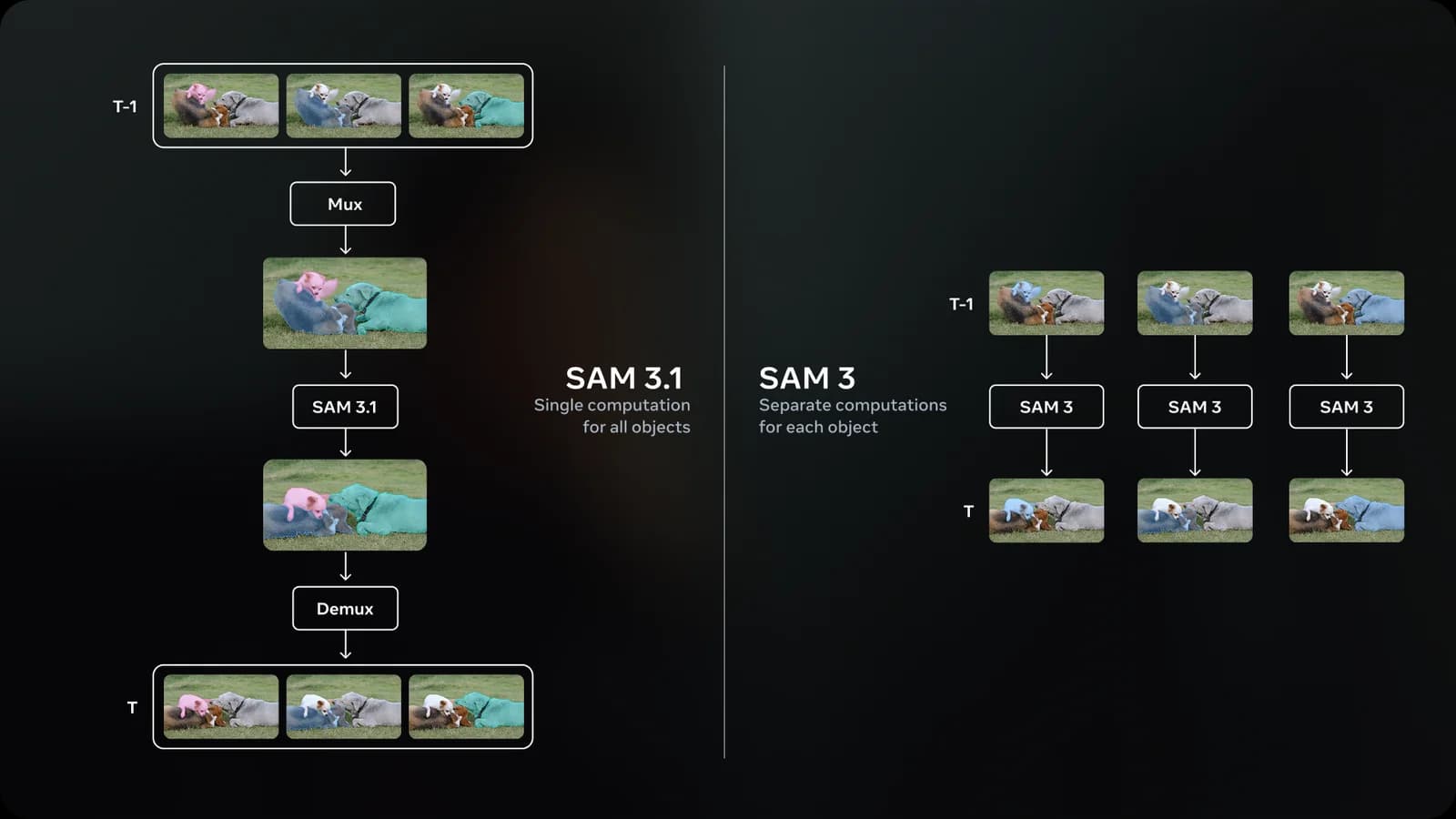

Meta's latest segmentation model: find, segment, and track objects across images and video from a single prompt. Object Multiplex pulls many subjects in one pass instead of requiring one call per object. Open weights, fine-tunable, sub-cent per frame.

Catalog-scale background isolation with higher accuracy than commercial alternatives at a fraction of the cost.

EXAMPLE: Remove the background from this product photo, preserving fine fabric edges and contact shadows.

Microscopy, astronomy, medical imaging — anywhere precise segmentation matters and labels are scarce. The FathomNet collaboration shows the underwater pipeline.

EXAMPLE: Segment all visible species in this underwater video frame (FathomNet pipeline).

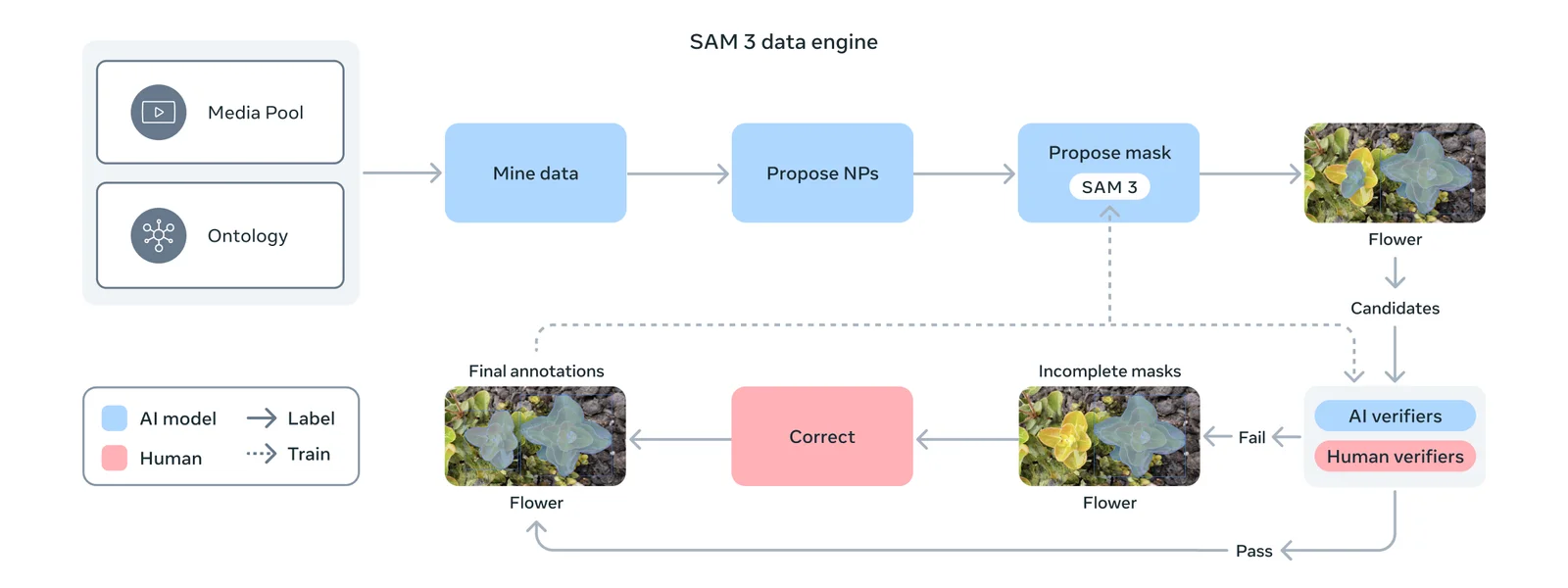

Bootstrap large-scale segmentation datasets at sub-cent cost per frame. Useful for in-house ML training data prep.

EXAMPLE: Generate polygon segmentation for every distinct object in this image.
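For dataset prep, polygon output typically needs to be stored in an annotation format a training pipeline can read. The sketch below assumes polygons arrive as lists of `(x, y)` pixel coordinates and packs one into a COCO-style annotation record. The input shape is an assumption, the helper names (`polygon_area`, `to_coco_annotation`) are hypothetical, and the source does not say which format the model emits.

```python
from typing import Any, Dict, List, Tuple

Point = Tuple[float, float]


def polygon_area(points: List[Point]) -> float:
    """Area of a simple polygon via the shoelace formula."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the polygon
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def to_coco_annotation(
    points: List[Point], annotation_id: int, image_id: int, category_id: int
) -> Dict[str, Any]:
    """Build a COCO-style annotation dict for a single polygon."""
    if len(points) < 3:
        raise ValueError("a polygon needs at least three points")
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    x_min, y_min = min(xs), min(ys)
    return {
        "id": annotation_id,
        "image_id": image_id,
        "category_id": category_id,
        # COCO stores each polygon as a flat [x1, y1, x2, y2, ...] list.
        "segmentation": [[coord for point in points for coord in point]],
        # COCO bounding boxes are [x, y, width, height].
        "bbox": [x_min, y_min, max(xs) - x_min, max(ys) - y_min],
        "area": polygon_area(points),
        "iscrowd": 0,
    }
```

Usage: `to_coco_annotation([(10, 10), (30, 10), (30, 20), (10, 20)], annotation_id=1, image_id=7, category_id=3)` yields a record whose `bbox` is `[10, 10, 20, 10]` and whose `area` is `200.0`.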

Pay only for successful generations. No idle, no minimums, no per-seat. Volume discounts kick in at 10K req/mo.

One key. One bill. One SDK shape — across 100+ models. Free credits on signup, no card required.